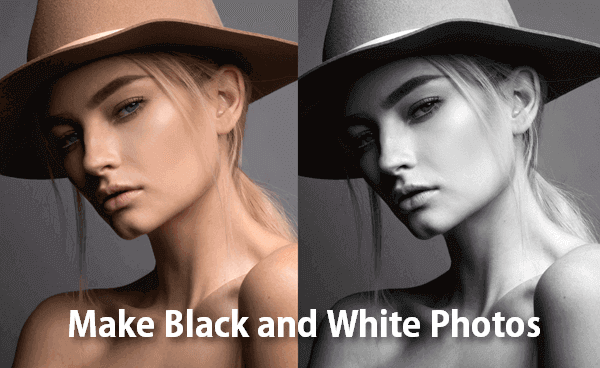

To test it, we took a photo of a small pumpkin and removed the color using Photoshop. If you don't like any of the preset color filters, you can click the pencil icon to edit the caption yourself, which guides the colorization model using a text prompt. "One model creates the text and the other takes the image and the text to generate the colorization."Īfter you upload an image, the site's sleek interface provides an estimated caption (description) of what it thinks it sees in the picture. "I’ve made a custom AI model that uses the image and text to generate a colorization," Wallner replied. We asked Wallner what kind of back-end technology runs the site, but he didn't go into specifics. Palette.fm uses a deep-learning model to classify images, which guides its initial guesses for the colors of objects in a photo or illustration. In sum, grayscale is most appropriate where color contrast itself is high.Further Reading Artist uses AI to generate color palettes from text descriptions Because white and yellow are relatively similar (that is, low contrast colors), we should hesitate about grayscaling our images. In this case, we should be weary of applying grayscale. Here, color also matters: the middle of the road contains yellow lines, and the edges of the road contain white lines.

Showing our models grayscaled images will still enable it easily distinguish between black and white players.Ĭonsider building a Tensorflow model to aid a self-driving car with lane detection. Even though color matters in this case, our colors of interest (black and white.), contain strong contrast. But we needn't be too concerned about applying grayscale as a preprocessing step. Here, it is apparent color matters for our model: one player is black pieces and the other is white pieces.

Imagine you're building a deep learning model in Keras to detect and classify chess pieces. Our answer is fairly intuitive, but with nuance: when we believe color provides meaningful signal to a model and colors are fairly similar in their appearance, we should be cautious to use grayscale. (*presumes images have been stored and fed to the model as single-channel images)

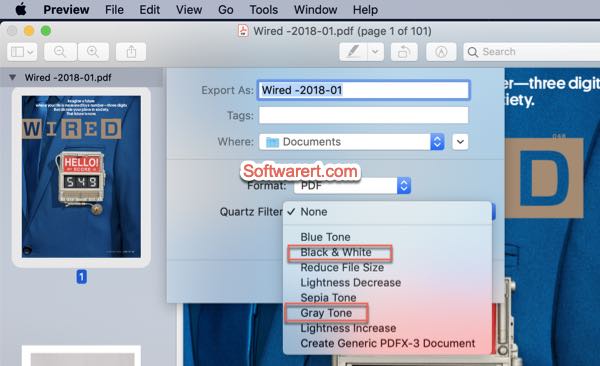

So, if grayscale is more computationally efficient*, perhaps a better question is when should we not use it as a preprocessing step for our machine learning models? Grayscale images, on the other hand, only store values in a single array (black and white), meaning the above calculation only requires a single convolution to be calculated. As opposed to doing a single convolution on just one array, PyTorch must perform convolutions on three distinct arrays. So when our neural network sees this, it does convolution on each of the red, green, and blue channels: RGB convolution in action! ( Source)Īs you can see, this adds complexity to our calculation. Our computer "mixes" these on the fly to produce color outputs. When an image is a color image, its stored digitally as an array of red values, blue values, and green values. So where does color come from?įundamentally, color is generated from mixing red, green, and blue in various proportions. Images are nothing but numeric values ranging from 0 to 255 stored in neat arrays for our computer to display saturation. Example preprocessing and augmentation steps available in Roboflow. This post is part of a series of images on preprocessing and augmentation.
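To make the trade-off concrete, here is a minimal Python sketch of two ideas from this post: how much contrast survives a grayscale conversion, and how channel count affects the work done by a convolution. It assumes the commonly used BT.601 luma weights (0.299, 0.587, 0.114) for the conversion; your own pipeline may use different weights. The helper names `to_gray`, `gray_contrast`, and `multiplies_per_output` are hypothetical and exist only for this illustration.

```python
# Assumption: BT.601 luma weights, a common (but not the only) choice
# for converting RGB pixels to grayscale.
LUMA_WEIGHTS = (0.299, 0.587, 0.114)

BLACK = (0, 0, 0)
WHITE = (255, 255, 255)
YELLOW = (255, 255, 0)


def to_gray(rgb):
    """Collapse one (R, G, B) pixel with 0-255 channels into one 0-255 intensity."""
    return round(sum(weight * channel for weight, channel in zip(LUMA_WEIGHTS, rgb)))


def gray_contrast(rgb_a, rgb_b):
    """Absolute difference between two colors after grayscale conversion."""
    return abs(to_gray(rgb_a) - to_gray(rgb_b))


def multiplies_per_output(kernel_size, channels):
    """Multiplications needed for one output value of one square convolution filter."""
    return kernel_size * kernel_size * channels


if __name__ == "__main__":
    # Chess pieces: black vs. white keeps the full intensity range.
    print("black vs. white:", gray_contrast(BLACK, WHITE))
    # Lane markings: white vs. yellow collapses to a small difference.
    print("white vs. yellow:", gray_contrast(WHITE, YELLOW))
    # A 3x3 filter touches three times as many values on RGB input.
    print("3x3 on RGB:", multiplies_per_output(3, channels=3))
    print("3x3 on grayscale:", multiplies_per_output(3, channels=1))
```

Under these assumed weights, black and white stay as far apart as two gray values can be, while white and yellow end up only a few dozen intensity levels apart. That gap is why the chess example tolerates grayscale and the lane-detection example may not.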
